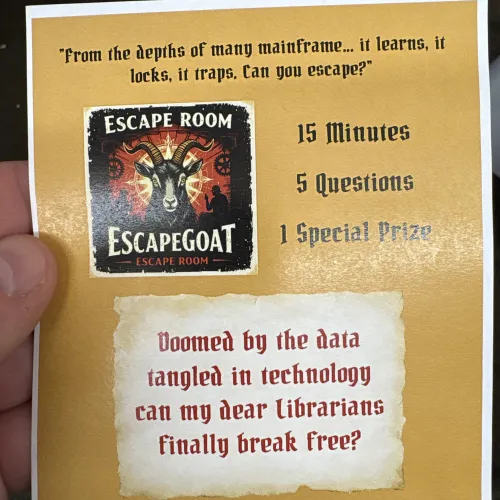

This is definitely one of the most interesting AI projects that I've been fortunate enough to work on! ScapeGoat, the AI-powered escape room for librarians, was demoed at the TLN Library Technology Forum in Michigan. This project was the brainchild of two former students of mine: Michael McEvoy of Northville [MI] Public Library and Shauna Quick of the Library of Michigan. I helped with prompt design and provided the back-end infrastructure used to run the escape room game and scoring system!

Essentially, the goal of the project was to introduce library attendees to AI by having them participate in a chatbot-powered virtual "escape room." The chatbot would act as game-master, eventually helping the participants solve logic puzzles. Once they solved enough puzzles, they would "be released" from the room.

I don't know about Michael and Shauna, but I had an absolute blast working on this project. I don't often get to work on puzzles or games for work, so I was eager to help.

AI Can't Reliably Generate, Judge, or Solve Logic Problems

There has been a ton written about the "reasoning" capability of modern Large Language Models (LLMs), but our first problem was the expectation that the AI could hand out logic puzzles based on, essentially, the prompt "Administer logic problems of increasing complexity to participants." Most of our testing indicated that the LLM could provide some basic, common logic puzzles, but it couldn't rank them by how hard a human would find them. It was also hard to get the LLM to provide a puzzle hint that was helpful without giving away the answer. We agreed that we'd need a more sophisticated approach.

We ended up using a "Question Bank" knowledge document. In AI terms, we provided "knowledge" to the AI: a list of logic puzzles and the "difficulty level" of each puzzle. Now, instead of telling the AI "think of a logic puzzle that a human would find easy," we tell the AI "select a logic puzzle from this list of human-approved easy puzzles and give it to the participants." Much better results!
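To make the "Question Bank" idea concrete, here is a minimal Python sketch of what such a bank might look like and how it could be turned into a knowledge document. Everything in it is an assumption for illustration: the `Puzzle` fields, the difficulty labels, the sample puzzles, and the helper names are hypothetical, not the actual ScapeGoat question bank.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Puzzle:
    difficulty: str  # hypothetical labels: "easy", "medium", "hard"
    question: str
    answer: str
    hint: str        # human-written, so it helps without giving the answer away


# Placeholder entries for illustration only, not the puzzles used at the forum.
QUESTION_BANK = [
    Puzzle("easy", "What has hands but cannot clap?", "a clock",
           "You probably glanced at one today."),
    Puzzle("easy", "What gets wetter the more it dries?", "a towel",
           "Think about the bathroom."),
    Puzzle("medium", "I speak without a mouth and hear without ears. What am I?",
           "an echo", "Try shouting in a canyon."),
]


def render_bank_for_prompt(bank):
    """Format the bank as plain text that could be supplied as a knowledge document."""
    lines = []
    for number, p in enumerate(bank, start=1):
        lines.append(f"{number}. [{p.difficulty}] {p.question}")
        lines.append(f"   Answer: {p.answer}")
        lines.append(f"   Hint: {p.hint}")
    return "\n".join(lines)


def pick_puzzle(bank, difficulty, already_asked=(), rng=random):
    """Pick an unused, human-approved puzzle at the requested difficulty."""
    candidates = [
        p for p in bank
        if p.difficulty == difficulty and p.question not in already_asked
    ]
    if not candidates:
        raise LookupError(f"no unused {difficulty!r} puzzles left in the bank")
    return rng.choice(candidates)
```

The point of the design is that a human decides the difficulty and writes the hint ahead of time, so the model only has to select and present a puzzle rather than invent and grade one.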

Getting Agentic With It

Initially, the group hoped that the AI itself could handle the technical aspects of game management, beyond just the logic puzzle stuff. Specifically, we wanted the AI to track how many problems the humans had correctly solved in any given game, and we wanted to impose a 15-minute time limit to keep the activity moving for the conference attendees playing.

But LLMs don't have any sense of time: they don't care if it takes you one minute to respond, or if you respond tomorrow! So they can't track a time limit at all. In addition, we found that mid-2025 AI models struggled to reliably keep track of a 5-point scoring scale through an entire conversation.

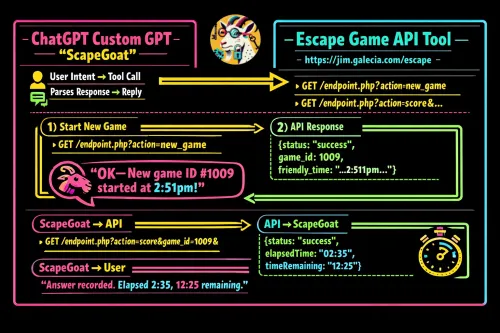

Here's where I got to really help: we gave our AI a "tool" and told it how to use it. This is often called "agentic" AI or turning a simple chatbot into an "agent" capable of doing stuff! Specifically, we told it that it could communicate with a scoreboard application to help it manage the escape room games. You can check out our specific script below, but essentially we gave it two tools in its toolbox:

- StartNewGame command started a new game countdown on the score server (and displayed it on the scoreboard). It sent the game ID # back to the chatbot so that it could reference that game for its duration.

- SubmitScore command notified the score server that the users had answered a question successfully. The server would note the score and the time remaining in the game and tell the chatbot. The chatbot was able to successfully interpret the time and score to tell the users useful info, like "OK, that's 3 out of 5 correct. You need two more correct and you have 6 minutes left!"

This ended up being the secret sauce that allowed this escape room concept to be a success!
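For readers who want to see the shape of the idea, here is a minimal in-memory sketch of a score server offering the two tools described above. It is not the code used at the forum. The class name, method names, return fields, and the rule that a late answer earns no point are all assumptions; only the 15-minute limit and the 5-point scale come from the description in this post.

```python
import itertools
import time

GAME_LENGTH_SECONDS = 15 * 60  # 15-minute limit described in the post
POINTS_TO_ESCAPE = 5           # 5-point scale described in the post


class ScoreServer:
    """Hypothetical stand-in for the scoreboard application."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock             # injectable so the timer can be tested
        self._ids = itertools.count(1)  # simple sequential game IDs
        self._games = {}

    def start_new_game(self):
        """StartNewGame: begin a countdown and hand back a game ID."""
        game_id = next(self._ids)
        self._games[game_id] = {"started": self._clock(), "score": 0}
        return {"game_id": game_id, "seconds_remaining": GAME_LENGTH_SECONDS}

    def submit_score(self, game_id):
        """SubmitScore: record one correct answer and report the game state."""
        game = self._games[game_id]
        elapsed = self._clock() - game["started"]
        remaining = max(0, GAME_LENGTH_SECONDS - elapsed)
        # Assumption: answers submitted after time runs out do not count.
        if remaining > 0 and game["score"] < POINTS_TO_ESCAPE:
            game["score"] += 1
        return {
            "game_id": game_id,
            "score": game["score"],
            "points_needed": POINTS_TO_ESCAPE - game["score"],
            "seconds_remaining": int(remaining),
            "escaped": game["score"] >= POINTS_TO_ESCAPE,
        }
```

The server, not the chatbot, owns the clock and the running total. The chatbot only needs to remember the game ID and read back whatever the last response said, which is how it can produce a line like "3 out of 5 correct, 6 minutes left" without tracking anything itself.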